Field Notes from Sabah: Rethinking How We Certify Learning

What a board, markers, and three questions taught us about measuring real change

The Challenge

How do you measure the impact of learning? More specifically, how do you verify that a capacity building program actually created change?

As Community Manager at GainForest, I have been wrestling with this question, especially for capacity building programs. Working with Bumicerts Version 1, we noticed a gap: some communities report one-off programs, but it is hard to verify that those programs actually changed anything. We see participant counts, training hours, and topics covered, all important metrics that show reach. But do they show transformation?

This question took me to Semporna, Sabah, working with teens at Universiti Alternatif, run by Borneo Komrad. My goal was to develop data standards that could capture whether capacity building programs actually shift what people know, feel capable of doing, and practice.

The Method: Keeping It Simple

I spent four days working alongside facilitators running capacity building sessions at Universiti Alternatif. The sessions covered:

Documenting Local Ecological Knowledge

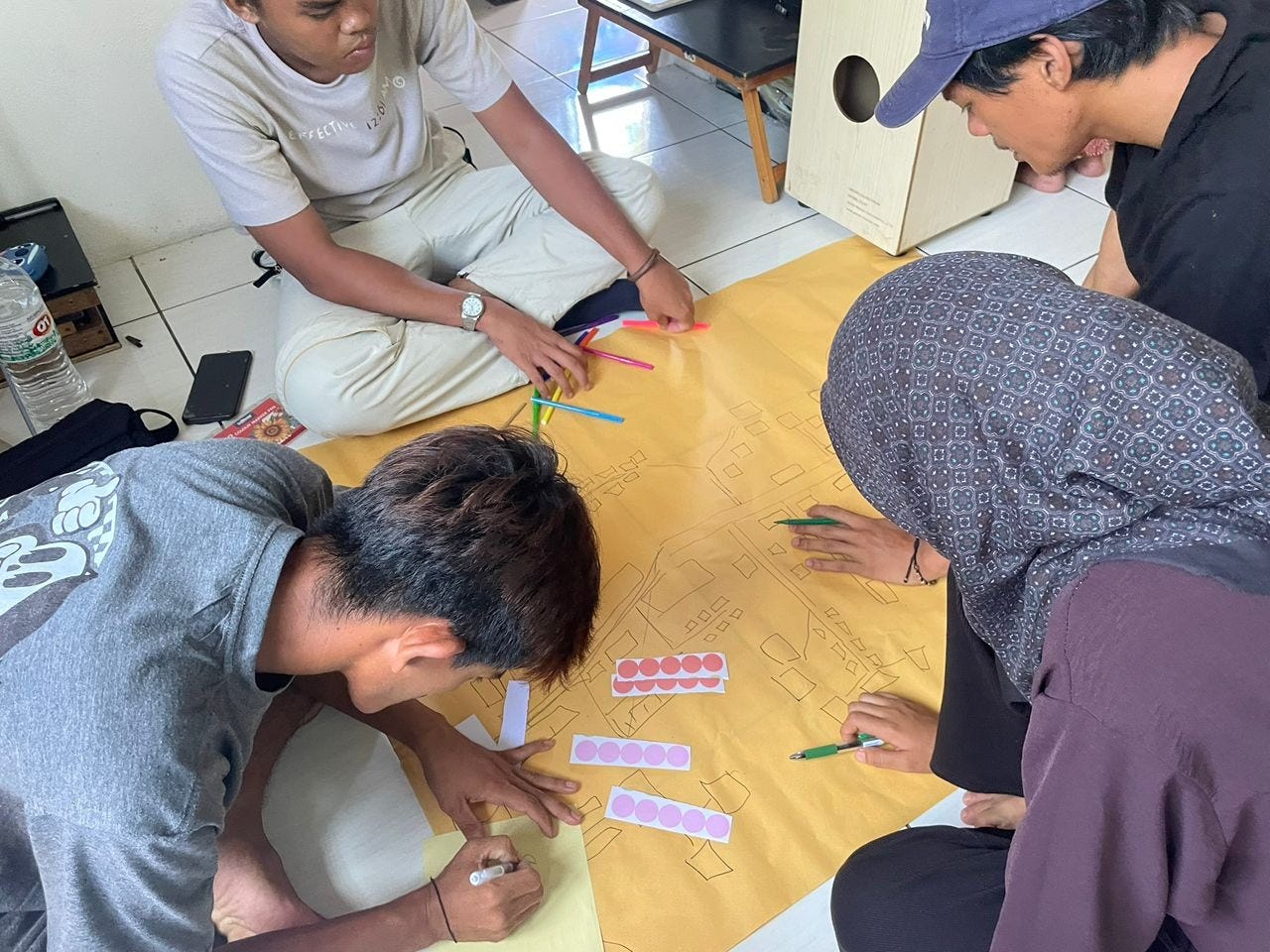

Disaster Preparedness Mapping

Telling Environmental Stewardship Impact Stories

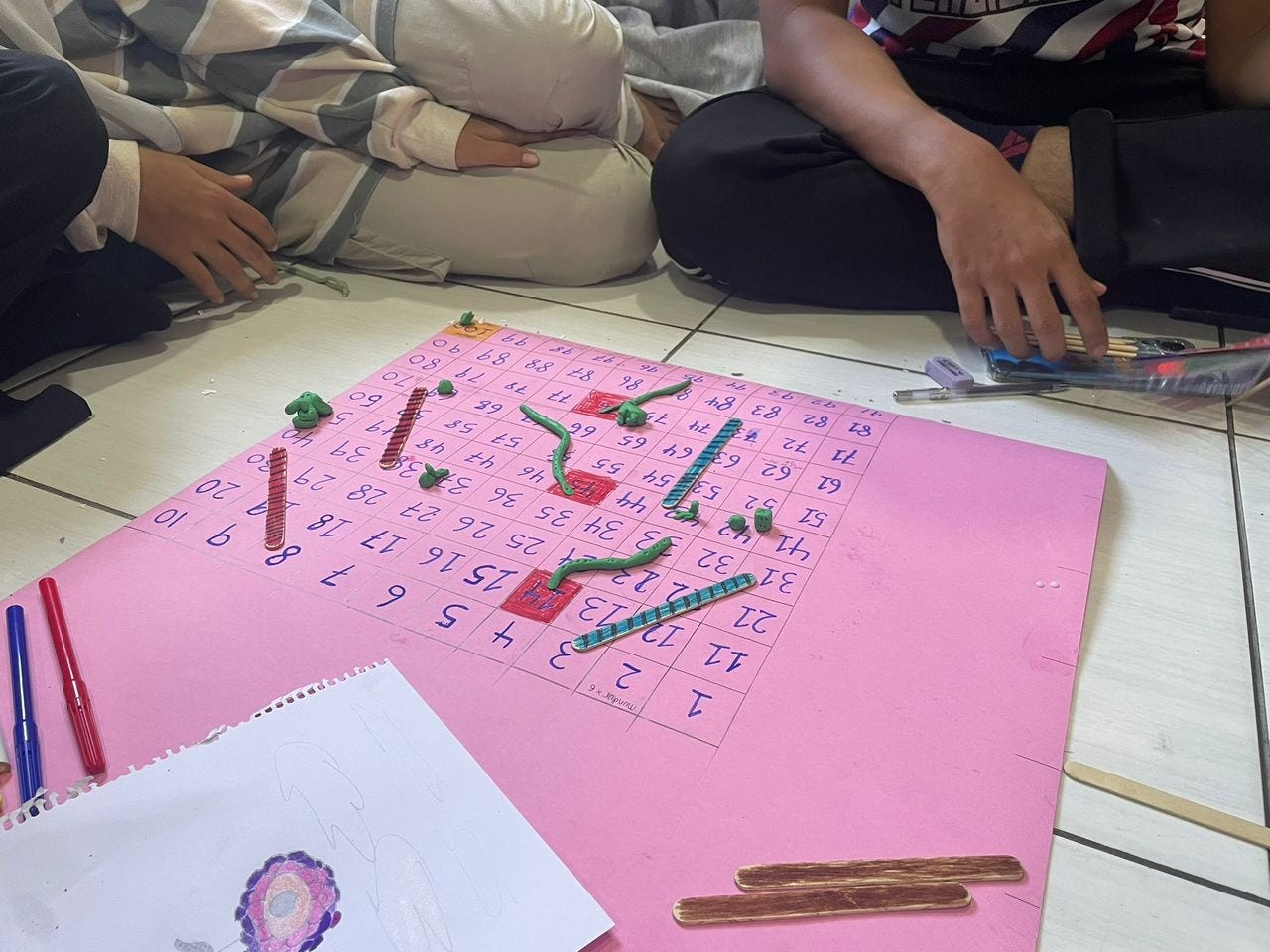

Gamifying Project Management

My overarching goal was not to deliver the training myself, but to research: could we develop data standards that capture whether these capacity building programs actually create change?

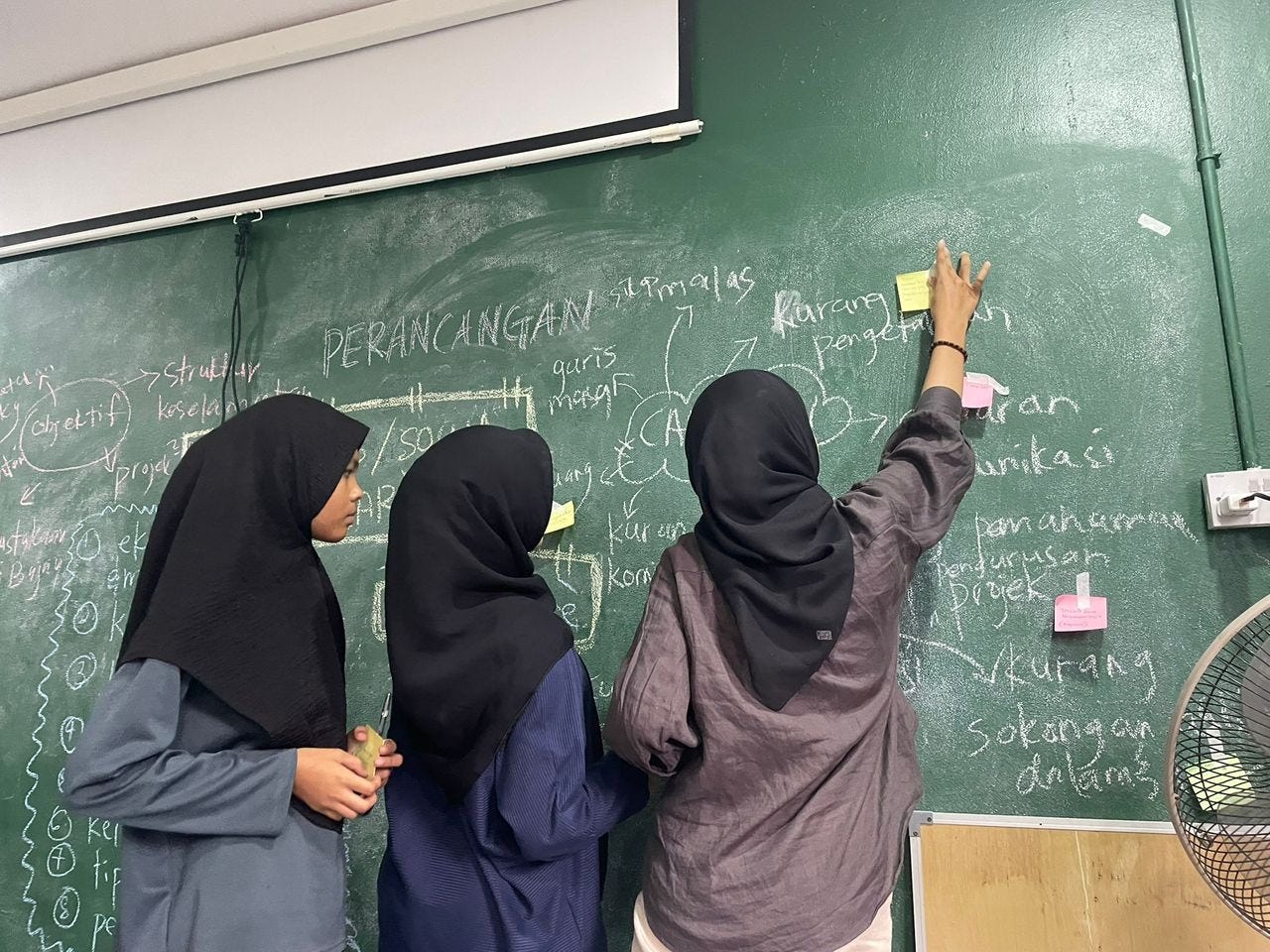

I went in with the simplest possible approach: a board, markers, and three questions asked before and after each session.

KNOW: What do you understand or know about this?

CAN: What do you feel capable of doing?

DO: What have you actually done or practiced?

Why so simple? A few reasons emerged from thinking about the communities I have been working with:

Forms might not be suitable—participants are not used to formal assessments

Too many questions would overwhelm

We needed qualitative insights, not just numbers

The method had to be replicable with minimal resources

The framework came from recognizing that change happens across multiple dimensions: understanding something (knowledge), feeling you could do it (confidence), and actually doing it (practice). All three matter.

What I Discovered: From “Not Sure” to Confidence

Working with teens at Universiti Alternatif revealed something unexpected. When we started Session 2, on disaster preparedness mapping, and asked “What can you do?”, the response was “not sure.”

These were teens who had directly experienced disasters. Nine out of fifteen had survived fires. Their community had built two fire extinguisher stations through external partnerships. They attended safety training. They were doing disaster preparedness work.

But they felt uncertain about their capabilities.

Two hours later, after learning basic mapping techniques, those same teens had created three complete community maps, identifying risk zones (fire-prone areas, flood zones, even areas where they risk arrest due to their stateless status) and mapping community resources (who can help, where to find food, escape routes).

The same pattern emerged in Session 3: “not sure” when asked about their ability to report environmental impact. Yet they were already running Re:Laut, a cleanup project in their village. They were already weighing collected trash (occasionally). They were making social media videos about their work. They had even built garbage bins in the school neighborhood because there is no proper waste management system in the floating village area.

They just did not have a framework to recognize and articulate what they were already doing.

Once we introduced the STAR framework (Situation, Task, Action, Results), three groups immediately wrote impact stories about their projects: environmental cleanup, economic self-reliance initiatives, and literacy programs. They said with confidence that they could use the framework.

What This Revealed

The “not sure” to confidence pattern taught me something critical about capacity building assessment:

Communities often already have the knowledge and are already doing the work. What they lack are frameworks to recognize, organize, and articulate their own competence.

This has huge implications for how we think about certification:

Starting from uncertainty is valid. It is not a deficit; it is an honest baseline. Our standards should not penalize communities for admitting what they do not know.

Simple frameworks unlock existing capabilities rapidly. The teens went from uncertain to creating concrete outputs (maps, impact stories, strategic plans) in single sessions. The tool was not creating capability from nothing; it was making visible what was already there.

Appreciate diverse learning methods. Across four sessions, participants created traditional cultural documentation (drawings, elder interviews), community risk maps, written impact stories using the STAR framework, and snakes-and-ladders game boards for project planning. Each community has its own ways of enabling learning. The data standards should not standardize these methods, but allow communities to document them in their narrative reports. What matters is capturing what worked and why, not forcing everyone to use the same approach.

The Documentation Challenge

One participant’s reflection stayed with me: “Even though we don’t have documents, we can still access knowledge from outside.”

For many marginalized communities, “documents” has layered meanings. Lack of legal papers means exclusion from formal systems like education. But there is another gap: these communities often lack frameworks to report their environmental stewardship in formats institutions recognize.

The irony is that they are doing sophisticated work (building fire safety infrastructure in collaboration with other organisations, running cleanups, practicing traditional ecological knowledge) but struggle to document it effectively.

This revealed what we need to address: capacity building reports usually show reach (participants, hours, etc.) but not transformation.

The solution requires meeting communities in the middle. Data standards need to provide legitimacy and structure that communities can follow, while remaining reachable for those with limited resources. Bumicerts data standards should incentivize growth: communities can start with accessible assessment methods (whiteboard documentation) and progress to more structured approaches as capacity increases.

What if certification required concrete evidence of change? Not bureaucratic reports, but meaningful documentation showing: what we knew, could do, and practiced before; what shifted after; what we learned.

What This Means for Data Standards

Based on this Semporna fieldwork, we are proposing standards that require two components:

Standard Metrics: The reach data (participants, duration, location, topic)

Change Evidence: Before/after documentation across knowledge, confidence, and practice. These should be captured however works for that community (verbal discussion captured in notes, drawings, maps, photos, written responses, games, videos).
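To make the two components concrete, here is a minimal sketch of how a single session record could be structured. This is an illustration only, not the Bumicerts schema: every class, field, and method name below is hypothetical, and the sample values are taken loosely from the Session 2 account above.

```python
from dataclasses import dataclass, field
from typing import List

# The three dimensions of change used in the before/after questions.
DIMENSIONS = ("know", "can", "do")


@dataclass
class StandardMetrics:
    """Reach data: who, how long, where, what (hypothetical field names)."""
    participants: int
    duration_hours: float
    location: str
    topic: str


@dataclass
class ChangeEvidence:
    """One before/after observation for a single dimension."""
    dimension: str          # "know", "can", or "do"
    before: str
    after: str
    medium: str = "notes"   # e.g. notes, drawing, map, photo, game, video

    def __post_init__(self):
        if self.dimension not in DIMENSIONS:
            raise ValueError(f"dimension must be one of {DIMENSIONS}")


@dataclass
class CapacityBuildingRecord:
    """Combines reach metrics with evidence of change."""
    metrics: StandardMetrics
    evidence: List[ChangeEvidence] = field(default_factory=list)

    def missing_dimensions(self) -> List[str]:
        """Dimensions that have no before/after evidence yet."""
        covered = {e.dimension for e in self.evidence}
        return [d for d in DIMENSIONS if d not in covered]

    def is_complete(self) -> bool:
        return not self.missing_dimensions()


# Illustrative values drawn from the Session 2 narrative; only the
# CAN dimension is filled in here because that is what the text reports.
record = CapacityBuildingRecord(
    metrics=StandardMetrics(
        participants=15,
        duration_hours=2.0,
        location="Semporna, Sabah",
        topic="Disaster Preparedness Mapping",
    ),
    evidence=[
        ChangeEvidence(
            dimension="can",
            before="not sure",
            after="created three complete community maps and identified risk zones",
            medium="map",
        )
    ],
)

print(record.missing_dimensions())
print(record.is_complete())
```

The `medium` field is free text on purpose, so a drawing, a game board, or notes from a verbal discussion can all count as evidence. The completeness check only asks whether each of the three dimensions has a before and an after, not what format the evidence takes.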

The key principles:

Resource-based: Start from what participants already know and do

Change-focused: Show transformation, not just activity delivered

Context-flexible: Methods adapt to community needs

Low-barrier: Achievable with basic resources (we proved this with a board and markers)

This can work as either a standalone Bumicert (certifying the capacity building program itself) or as an evidence layer strengthening larger project claims (like “community-led restoration” backed by documentation that the community actually built those capabilities).

Reflections: What I’m Still Wrestling With

On rigor vs. accessibility

How do we maintain credibility while validating low-tech, community-generated documentation? What makes a hand-drawn map or a snakes-and-ladders game board “rigorous enough”? I think the answer lies in what they demonstrate (strategic thinking, risk assessment, resource mapping, forward planning), not the format they take.

On integration

Environmental stewardship in these communities is inseparable from cultural preservation, economic resilience, community protection, and education. When teens documented traditional Bajau clothing, they were documenting ecological knowledge (materials from nature, meanings tied to environment). When they mapped disaster risks, they included social and legal vulnerabilities that compound environmental risk. Our standards cannot artificially separate “just environmental” from the holistic reality of community stewardship.

On my role

As Community Manager, I thought my job was to bring frameworks to communities. Semporna taught me it is more about bringing methods that help communities recognize the frameworks they are already using. The teens already understood vulnerability, resource mapping, and project management. They just did not have the language or tools to make that knowledge visible and shareable.

What Comes Next

These data standards for capacity building programs are still being developed, informed by field research with communities practicing environmental stewardship. The approach will continue to evolve as we learn what works in different contexts.

The standards are starting points, not mandates. Other approaches beyond Know-Can-Do are valid. What matters is capturing evidence that change happened, that learning wasn’t just delivered, but received and integrated.

One of the most powerful reflections from participants: “Two-way learning—not bringing ideas, just methods.”

That is what good data standards should enable: methods for communities to document their own knowledge, capabilities, and transformation on their own terms, in formats that work for them, validated by evidence that change actually occurred.

Because if we cannot verify that capacity building creates change, we are just counting hours and bodies. And communities doing the work deserve better than that.

Note: This field research informs ongoing development of Bumicerts data standards for capacity building programs. Feedback is welcome as we continue testing and refining these approaches with diverse communities.
